Singapore is hot. Even though I grew up in Jamaica, I’ve been in the northeast USA for 19 years (!!!) and can confidently say I forgot how to manage the heat. So, I’ve decided to sit in the nice hotel air conditioning in the middle of the day to bring you a fresh recap of ROSCon 2025.

I had long been looking forward to ROSCon 2025, firstly because, despite my complaints about the heat, Singapore is an exciting destination and I wanted to eat all the food. Secondly, and more relevant to this post, I was involved in about as many things as possible for the conference. I served as a reviewer for both submitted talks and diversity scholarship candidates, had a workshop accepted with my ROS Deliberation Community Group collaborator Christian Henkel, represented the RAI Institute for the keynote (!!!) talk with my coworker Tiffany Cappellari, and even snuck in a lightning talk on a personal project I’ve been doing behind the scenes.

Throughout the rest of this post, I’ll break down some of the work that led to so much air time at ROSCon 2025, along with a mountain of unsolicited personal opinions. Enjoy!

Photo taken from the official ROSCon 2025 web site.

On Combating the AI Hype Cycle

In last year’s ROSCon post, I lamented that all the AI talks were centered around LLM wrappers for “agentic AI” workflows. While this direction of applying pre-trained models towards robotics is still going strong, I was hoping to see more content on the uses of machine learning closer to the robots themselves. Fortunately, I found myself with a handful of great opportunities to be the change I wanted to see.

Right after ROSCon 2024, I already had the idea floating in my mind to submit a talk that was more geared towards end-to-end robot learning workflows. Given that we are using ROS on several of our projects at the RAI Institute, my colleague Tiffany Cappellari and I decided to put together a proposal. While I work on the manipulation team, which primarily uses Franka robots, Tiffany is a software engineer who has directly maintained the RAI Institute’s open-source Spot ROS 2 tooling, and in fact gave a talk about this last year. We figured we could join forces and submit a talk that more broadly covered our use of ROS at the RAI Institute. We were worried that since we weren’t discussing any other open-source tools of our own, our chances of acceptance would be slim, but what we didn’t know is that the Open Source Robotics Alliance (OSRA) had been cooking up their own plans that worked really well with the content of our talk.

So, we got the keynote talk. No pressure.

Photo courtesy of Marq Rasmussen.

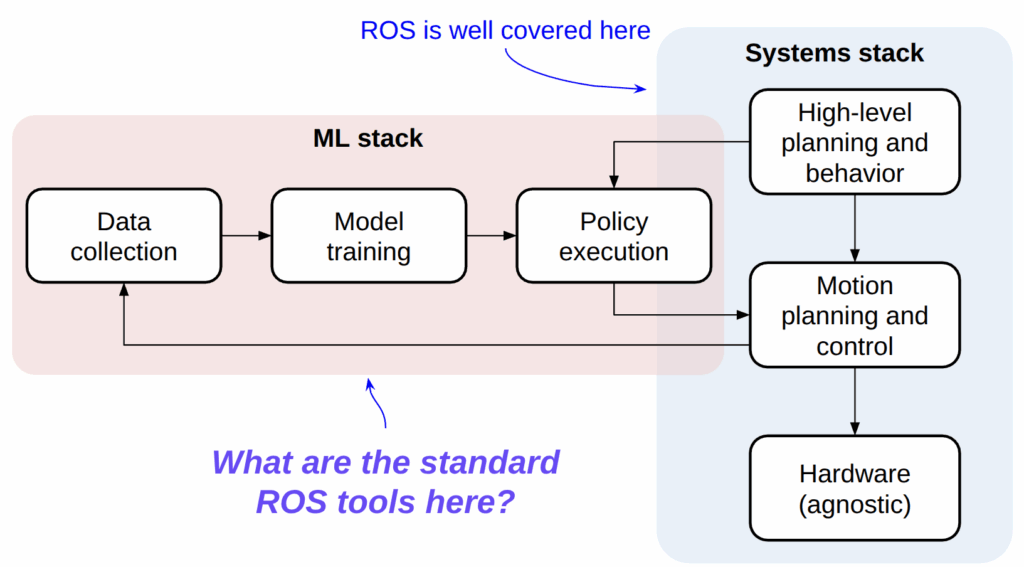

Our talk basically delivered a one-two punch that can be summarized by the diagram below. Despite its flaws (which any software will have), ROS is pretty good for standing up multi-language, multi-process software systems that can work on a variety of robot hardware platforms. At the same time, as robot learning tools are rapidly entering the mainstream — most notably, frameworks like LeRobot, Neuracore, and Ark and middleware alternatives like Dora and Copper — ROS is finding itself pressured by newcomers who can move quickly due to more modern software tooling and simply having less technical debt from years of existing. We discussed what has worked for us, and where we had to fill in some gaps.

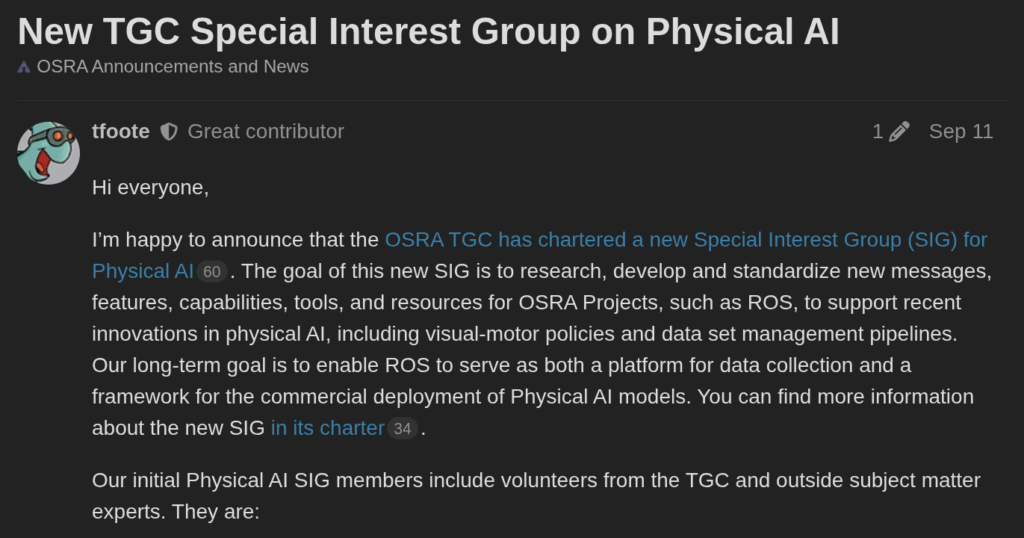

It turns out that the reason we landed the keynote was that many of the things that kept us awake about our ROS usage for end-to-end learning were things that likely did the same for the OSRA. After our talk was accepted, I was contacted by Tully Foote and Yadu Vijay from Intrinsic about a newly forming special interest group around ROS for Physical AI, which we (the RAI Institute) now participate in alongside several other organizations suffering from the same woes. Indeed, it was both hilarious and reassuring that many of the presentations and meetups at ROSCon 2025 echoed the same sentiments that were in our talk. It’s a big deal that so many companies are solving the same problem in their own sandboxes, and this opportunity to actually talk to each other could work out really well for the overall community if we are able to turn our talking into actual work going forward.

A tangential contribution to fighting the AI hype beast came from the ROS Deliberation Community Group that I have been co-moderating with Christian Henkel since he asked me to help out after ROSCon 2024 a year ago. Last year, Christian gathered up 6 (yes, six) members of this group — myself being one of them — to deliver a massive full-day workshop on deliberation technologies such as state machines, behavior trees, and task planning. This year, Christian and I together decided to explore the less “old-school” path of reinforcement learning (RL) with applications towards high-level decision making… and despite putting together an extremely last-minute proposal (don’t tell the organizers!), we also got accepted for a half-day workshop! Now there was even more pressure.

This turned out to be an interesting workshop because the ROS part was actually pretty minimal. Most of the content was centered around introducing newcomers to RL by giving them a crash course on the theory, as well as a chance to try practical things like tuning reward functions, switching algorithms, and evaluating policies… but all in the span of 4 hours. As some of you who have tried RL might know, 4 hours is often not even sufficient to finish a single training run for any realistic problem, so Christian and I spent a lot of effort finding the right balance between picking a non-trivial problem and giving attendees the chance to iterate. Our ticket here was leaving 45 minutes at the end of the workshop to discuss with the audience that our problems were simplified for educational purposes and giving them lots of resources and time for Q&A to understand what “real” applications of RL might look like.
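To give a flavor of that iterate-quickly loop, here is a minimal sketch of tabular Q-learning on a toy problem. This is not the workshop code: the corridor environment, the function names (`step`, `train`, `evaluate`), and all parameter values are made up for illustration. The reward parameters passed to `step` are the kind of knobs you would tune, and `evaluate` rolls out the learned greedy policy.

```python
import random

# Hypothetical toy problem (not from the workshop): a 1-D corridor of cells.
N_STATES = 6          # cells 0..5; the agent starts in cell 0
ACTIONS = (-1, +1)    # move left or move right
GOAL = N_STATES - 1   # the episode ends when the last cell is reached


def step(state, action, step_penalty=-0.01, goal_reward=1.0):
    """Apply an action. The two reward parameters are the knobs to tune."""
    next_state = min(max(state + action, 0), GOAL)
    done = next_state == GOAL
    reward = goal_reward if done else step_penalty
    return next_state, reward, done


def train(episodes=500, alpha=0.5, gamma=0.95, epsilon=0.2, seed=0, **reward_kwargs):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))  # explore
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])  # exploit
            next_state, reward, done = step(state, ACTIONS[a], **reward_kwargs)
            # Bootstrap from the best next action unless the episode ended.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q


def evaluate(q, max_steps=50):
    """Roll out the greedy policy and return (reached_goal, steps_taken)."""
    state, steps = 0, 0
    while state != GOAL and steps < max_steps:
        a = max(range(len(ACTIONS)), key=lambda i: q[state][i])
        state, _, _ = step(state, ACTIONS[a])
        steps += 1
    return state == GOAL, steps


if __name__ == "__main__":
    q_table = train()
    print(evaluate(q_table))
```

A problem this small trains in well under a second, which is exactly what makes it possible to change `step_penalty` or `goal_reward`, retrain, and compare policies several times in one sitting. Realistic problems do not offer that luxury.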

You can ask folks who attended what they thought, but I am happy with how this turned out. The repository and slides are all openly available on GitHub, so you should take a look! https://github.com/ros-wg-delib/rl_deliberation

Samuel Oyefusi, Christian Henkel, me, and Samuel Obiagba.

Photo courtesy of Samuel Oyefusi (LinkedIn).

Motion Planning for the People

Besides the whole AI thing, which is mostly circumstantial, I am a motion planning person. I previously worked on a product built on top of the open-source MoveIt 2 package, and always found it exciting to find ways to contribute fixes from the product development back upstream for the community to benefit. Building off this interest, I became a maintainer for MoveIt through small contributions of my own, but also through mentoring for programs such as Google Summer of Code (GSoC).

I’ve spoken about all of this before in previous posts, so let me not repeat myself too much. At ROSCon 2024 last year, my GSoC collaborators Sebastian Jahr and Aditya Kamireddypalli got a talk accepted for their work, so I got to hang out with them in Denmark. However, I had never met my mentees from the previous year (2023) because they were on the other side of the world. Now that we were in Southeast Asia, though, and both these mentees happen to have full robotics careers going these days, I was fortunate to meet both Mohamed Raessa (based in Japan) and V Mohammed Ibrahim (based in India) in person for the first time in one go! You just don’t get opportunities like these every day, and this is exactly why conferences rotate locations.

Mohamed Raessa (left) and V Mohammed Ibrahim (right).

Photo courtesy of Mohamed Raessa.

Alright, so let’s get back to the MoveIt / motion planning side of things. Once I left my previous employer I didn’t really find myself using MoveIt, but at ROSCon 2024 I realized that there were still loads of people using the software! This gave me a burst of motivation a year ago to take up the reins of maintaining MoveIt, which led to quite a lot of work digging it out of its half-working state, reviewing community pull requests, cutting releases, and so forth. However, I got burned out in a matter of a few months, as I sat there asking myself why I was spending my precious free time maintaining a tool I didn’t even use. There was no joy in the small tweaks and fishing through complexity, even though there certainly was appreciation by the community.

In parallel, over the last year I had gone on my own educational adventure for motion planning, which culminated in my massive How Do Robot Manipulators Move? blog post and the accompanying software, PyRoboPlan. At the same time, I’d been licking my wounds over a failed attempt at work to develop a comprehensive motion planning stack that didn’t catch on due to its complexity and consequent unwillingness by most teammates to figure it out. Sound familiar?

Surely there was a way for me to gather up all these feelings and put them towards a more constructive use of my time?

In May 2025, I removed myself from the MoveIt maintainer group mostly for my own mental well-being. If I didn’t have any permissions to the code, I would no longer be able to feel guilty about its state and spend my spare time fixing it because nobody else was going to. I also stopped trying to maintain PyRoboPlan and the work-related motion planning stack. My personal time went towards a project that more than anything would convince me that it was possible to take my experiences and make something that people might actually want to work with. Over the next few months, I tried to make something that

- Was written in C++ for performance, but had Python bindings for hackability and visualization.

- Was not entangled with ROS or other middleware, to facilitate ease of use, testing, and development of the above Python bindings.

- Could actually do enough to be useful, and welcomed people who are smarter than me to make their own algorithmic contributions.

I took the same model as PyRoboPlan, in which I heavily relied on the Pinocchio library to represent robot models and their kinematics/dynamics. However, this time I worked with their C++ API. My coworker had told me about nanobind, the successor to pybind11 by the same author, and that was a good choice because nanobind is super easy to use. I also started playing around with the Viser visualizer that other colleagues exposed me to. This was also a good choice because PyRoboPlan relied on the dated Meshcat software, and Viser is a similar, but more modern and actively maintained alternative.

Fast forward to ROSCon 2025, by which point we had a sufficiently working proof of concept that I decided to submit a moderately spicy lightning talk that began with a question to the audience: “do you find yourself yearning for an open-source motion planning library that is easy to use and actively maintained?”. This talk introduced our new tool, RoboPlan, to the community, and I think we got a lot of interest!

This was not a solo effort, by the way. I want to give shout-outs to:

- Erik Holum, for doing a ton of PR review and sanity checking, but also actively working on examples and the ROS interface to RoboPlan, which will be released soon.

- Jafar Uruç, for also providing a lot of encouragement and points of reference through his previous work, as well as adding Pixi support to RoboPlan.

- Catarina Pires, for being an early tester and initial contributor, as part of a mentorship program we have been working together on.

- Brent Yi, for being a super responsive maintainer of Viser and helping me with both the official Pinocchio Viser visualizer I added and a gnarly reference leak that nanobind discovered.

- The Pinocchio maintainer team, for making an amazing piece of software, but also fixing all the recent issues with mimic joint support and helping shepherd the 3.8.0 release that includes all the fixes and features I mentioned above! I look forward to your 4.0 release which adds nanobind support, because this is going to be huge for seamless interoperability with RoboPlan.

This also will not be able to continue as a solo effort. I think we’ve done a reasonably good job making an initial release that balances capabilities with ease of use, but there is a lot of work left to make this usable for meaningful applications. I cannot promise that it has all the functionality you need, but I can promise that I will help integrate new functionality and work towards making this a motion planning framework that people want to use. If you are interested in contributing, please reach out to me or start poking around the repository at https://github.com/open-planning/roboplan.

Concluding Thoughts

The community is easily the best part of ROS. There is a lot of hate for ROS because of all the baggage it carries due to its age compared to more modern tools (and a good portion of it is honestly warranted), but the message at ROSCon 2025 was clear all the way up to the Open Robotics board level: people are actively working to fix these problems. This includes:

- The OSRA Special Interest Group in Physical AI, which I already mentioned.

- Efforts to modernize and ease installation and packaging, such as Pixi and RoboStack (which we used in our RL workshop, by the way).

- Efforts to improve middleware issues by continuing to put effort into Zenoh as a Tier 1 alternative to DDS.

- Rust. rclrs looks really cool and well-featured.

I am delighted that the community is no longer pretending that ROS is a magical tool that will solve all problems, especially in the wake of new data-driven techniques for programming robots. However, there are very talented engineers around the world who gather in places like these to figure out how to fix the problems in open-source software, rather than just complaining and/or further fragmenting the community. It’s a delight to be surrounded by people who are willing to go out of their way to help others instead of being singularly focused on their own bottom line, regardless of how this ROS thing shakes out down the road. Thank you all for giving me a feeling of belonging at a time when I’m struggling to find it back home. Until next year in Toronto!

Photo courtesy of Stephanie Eng.

… oh, and one more meme just to make fun of Davide.
